Table of contents

- Introduction

- Quick Mental Model

- The Signal Processing Foundation

- The Equivalence of Prediction and Compression

- Hallucinations as Compression Artifacts

- Decoding AI Quirks Through Information Theory

- Recontextualizing the AI Engineering Stack

- Conclusion

- References

Introduction

Many explanations of large language models rely on human metaphors: they "know," "think," "reason," or "get confused." Such language is convenient, but it obscures the underlying mechanics. A more precise way to understand both the strengths and the failure modes of LLMs is to treat them not as minds, but as compression systems.

This perspective immediately clarifies several familiar behaviors:

- why LLMs can sound fluent while being wrong,

- why they are often better at code structure than exact arithmetic,

- why retrieval, fine-tuning, and prompt design work at all.

At a high level, an LLM does not store the world in the manner of a database preserving exact records. Instead, it stores a compressed statistical representation of patterns in language. As with any lossy compression system, broad structure is preserved more reliably than fine-grained detail.

If you remember only one sentence from this article, make it this one:

An LLM is something like a JPEG for knowledge: incredibly useful, often faithful at a glance, and unreliable exactly where sharp details matter most.

Quick Mental Model

Before turning to details, it is useful to establish an intuitive version of the argument.

Consider three different ways of preserving information:

| System | What it keeps well | What it loses first |

|---|---|---|

| ZIP archive | Exact bytes | Almost nothing, but compression gains are limited |

| JPEG / MP3 | Overall appearance or sound | Fine detail, crisp edges, subtle texture |

| LLM | Broad language patterns, common facts, familiar structures | Precise facts, niche details, exact numbers |

This table captures the central argument in compact form.

LLMs do not memorize the internet line by line. They compress recurring structure into parameters and later reconstruct likely continuations from that compressed representation. In many cases, that reconstruction is remarkably effective. However, when the source information requires high precision, the model may produce a smooth approximation rather than the exact original.

Memory device: think "blur, predict, reconstruct"

As a compact mnemonic, the process can be summarized in three steps:

- Blur: training compresses huge amounts of text into a smaller parameter space.

- Predict: the model learns what usually comes next.

- Reconstruct: generation is the model rebuilding likely text from compressed patterns.

This is why an LLM can be fluent without being exact.

The Signal Processing Foundation

In 1807, Jean-Baptiste Joseph Fourier introduced one of the most powerful ideas in applied mathematics:

Fourier's theorem: Any continuous signal, regardless of its apparent complexity, can be decomposed into a sum of simpler orthogonal sine and cosine waves.

At first this may appear abstract, but it is precisely the principle on which modern compression relies.

When an audio file becomes an MP3, the encoder transforms sound into a frequency-oriented representation and throws away pieces humans are less likely to notice. For example, a human ear cannot hear sound above 20 kHz - so this high-frequency information can be discarded without a noticeable loss in quality. Similarly, too quiet sounds are not perceived, so they can be removed as well. Futhremore, the ear cannot recognize sounds that are masked by louder sounds, so those can be removed too. The result is a smaller file that still sounds good to human listeners. As a result, MP3 can compress audio by a factor of 10 or more while retaining much of the original's perceptual quality.

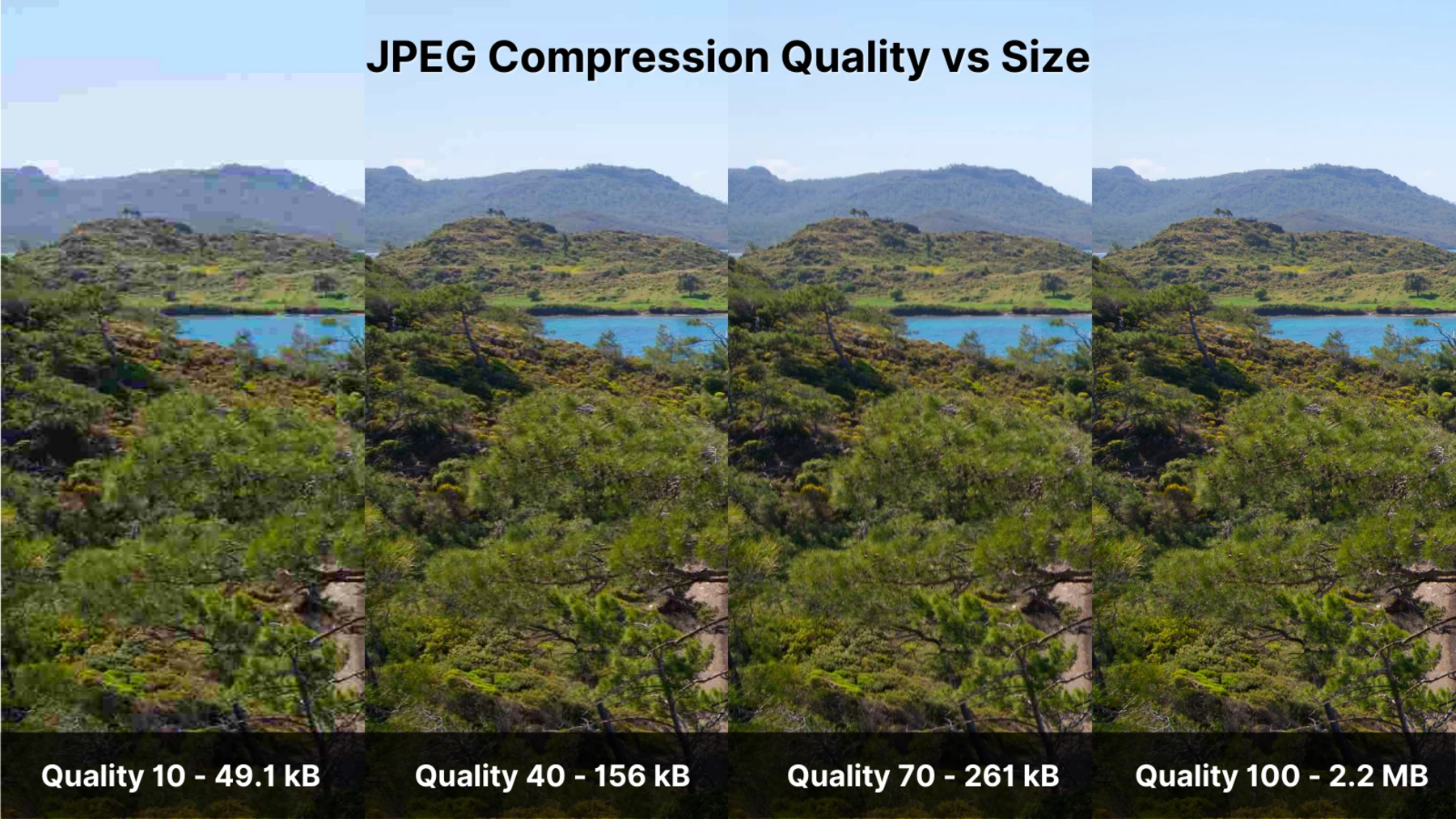

JPEG does something similar for images. It breaks an image into blocks, converts them into frequency-like coefficients using the Discrete Cosine Transform (DCT), and quantizes away high-frequency detail that the eye is less sensitive to.

In both cases, the result is smaller, cheaper to store, and often visually or acoustically sufficient. However, it is no longer the original. It is an approximation reconstructed from a compressed representation.

This is the essential setup for understanding LLMs: useful systems do not always preserve reality faithfully. In many cases, they retain a cheaper and blurrier representation that remains sufficient for most practical purposes.

The Equivalence of Prediction and Compression

To connect this principle to text, it is necessary to turn to Claude Shannon.

In his 1948 work on information theory, Shannon showed a deep relationship between prediction and compression. If a system can predict what comes next in a sequence, then it can encode that sequence more efficiently. The more predictable the data, the fewer bits you need to describe it. In information theory terms, lower surprise means lower entropy .

The next symbol prediction and data compression - mathematically equivalent tasks. A good predictor is also a good compressor.

This matters because modern LLMs are trained with one core objective: predict the next token as accurately as possible by minimizing cross-entropy loss. Although this appears to be a language task, mathematically it is also a compression task.

When researchers train on terabytes of text and distill that corpus into a model with billions of parameters, they are compressing vast regularities of human language into a compact latent representation. The model does not contain an organized archive of original documents. Instead, it contains a dense summary of statistical structure.

Sticky idea: why next-token prediction and compression are cousins

If I say:- "peanut butter and ___"

- "2 + 2 = ___"

- "once upon a ___"

the next token can be predicted with fairly high confidence. This means such phrases can be encoded efficiently because little uncertainty remains. In effect, a good predictor is also a good compressor.

Hallucinations as Compression Artifacts

Once LLMs are viewed as compression systems, hallucinations become substantially less mysterious.

When a JPEG is compressed too aggressively, it usually preserves the broad structure of a scene: sky, face, building, road. What disappears first are sharp edges and delicate details. During decompression, the image decoder fills in what is missing with the best approximation available.

This is closely analogous to what happens when an LLM "makes something up."

Suppose the model has strong evidence that a sentence should contain a date, citation, URL, or paper title, but the exact detail was not preserved clearly in its parameters. It still tries to complete the pattern. The result can be syntactically perfect, semantically plausible, and factually false.

Consider a street sign in a heavily compressed photograph. The blue sky remains recognizable, and the presence of text remains obvious. However, the precise edges of the letters become smeared, and the decoder introduces a fuzzy border around them. The system preserves the fact that something belongs there, but not its exact form.

LLMs exhibit the exact same behavior with semantic information:

| Type of information | Compression outcome | Typical LLM behavior |

|---|---|---|

| Common concepts and repeated language patterns | Preserved well | Fluent, coherent answers |

| Familiar historical or technical structure | Usually preserved | Good summaries and explanations |

| Exact numbers, dates, citations, URLs, niche details | Preserved poorly | Plausible but invented specifics |

Hallucination is therefore often better understood as a reconstruction error rather than as deception. The model is not lying in the human sense; it is filling gaps with the statistically most likely continuation.

Reader shortcut:

Broad pattern in, broad pattern out. Sharp detail in, fuzzy detail out.

Decoding AI Quirks Through Information Theory

Thinking of LLMs as "lossy text codecs" also explains several of their most counterintuitive behaviors.

Why Code Often Works Better Than Arithmetic

Code is full of repetition, local structure, and predictable syntax. A line such as for i in range(len(arr)): exists within a dense ecosystem of recurring patterns. That makes it relatively compressible and easier to reconstruct.

Arithmetic is different. The expression has exactly one correct answer. There is no room for approximation. If the model is relying on compressed statistical patterning rather than executing an algorithm, even a small deviation becomes an incorrect result.

Why Bigger Models Usually Hallucinate Less

Increasing parameter count is not magic. It is closer to increasing bitrate in audio compression or raising the quality setting in JPEG compression. More parameters provide more capacity to preserve finer distinctions. Reconstruction improves because the model has more room to store detail.

This does not make the system lossless. It merely reduces the severity of the blur.

What LLM Temperature Really Does

In the context of LLMs, temperature is an inference-time setting that controls how deterministically or how broadly the model samples from its next-token probabilities. It is often described in anthropomorphic language, as though it controls "creativity." A more technical description is that it reshapes the output probability distribution before sampling.

- Low temperature concentrates probability on the most likely tokens.

- High temperature spreads probability across more candidates.

This may appear as creativity, but mathematically it is closer to sampling from a flatter distribution. The decoder is permitted to consider less probable continuations.

Another memory device: "code compresses, arithmetic resists"

If one asks why LLMs can write a React component yet still fail at a multiplication problem, the concise answer is:

- Code has reusable structure.

- Arithmetic demands exact execution.

Compression is better suited to the first case than to the second.

Recontextualizing the AI Engineering Stack

Once the compression framing is adopted, many AI engineering practices become less mysterious and more mechanically intelligible.

Retrieval-Augmented Generation (RAG)

RAG works because it supplements lossy internal memory with lossless external memory. Instead of asking the model to reconstruct a fact from parameters alone, you hand it the exact document at inference time.

This is analogous to placing crisp source text on top of a blurry background image. The model no longer needs to infer the high-frequency detail because it has been provided directly.

Fine-Tuning

Fine-tuning changes what the model spends its representational budget on. If the base model is a broad compression of public language, fine-tuning tells it to preserve one region of that space with greater fidelity.

In practice, this means the model may become more capable in a specialized domain because more of its capacity is devoted to patterns from that domain.

Prompt Engineering

Prompting is often described as "talking to the AI the right way," but a more grounded view is that a prompt constrains which patterns the decoder should activate.

A strong system prompt does not inject new knowledge into the model. Rather, it narrows the reconstruction path. In effect, it instructs the decoder to operate from one region of latent space rather than another.

| Technique | Compression interpretation | Practical benefit |

|---|---|---|

| RAG | Add lossless facts at runtime | Reduces factual reconstruction errors |

| Fine-tuning | Reallocate representational capacity | Improves domain-specific fidelity |

| Prompting | Constrain which latent patterns are activated | Improves format, style, and task focus |

Conclusion

Large language models are not minds in the ordinary sense, but neither are they trivial parrots. They are high-capacity parametric memory systems that compress language, patterns, and correlations into a form that can later be decoded into fluent text.

This lens makes their strengths less mystical and their failures less surprising. They perform well where broad structure is sufficient and become fragile where exactness is essential. In other words, they behave as lossy systems behave.

There is also a useful human parallel: biological memory is reconstructive rather than perfectly archival. Humans routinely compress experience, discard detail, and later fill gaps with plausible reconstruction. LLMs do something analogous, though with tokens rather than neurons.

If the mysticism is set aside in favor of the mathematics, a more useful engineering intuition emerges:

- treat model memory as compressed,

- treat hallucinations as artifacts,

- treat external retrieval as a way to restore missing detail.

This is a more reliable foundation for building AI systems than pretending the model "just knows."

One-minute recap

- LLM training is next-token prediction.

- Next-token prediction is tightly linked to compression.

- Compression preserves broad patterns better than sharp details.

- Hallucinations are often the semantic equivalent of JPEG artifacts.

- RAG, fine-tuning, and prompting all make more sense once you see the model as a lossy memory system.